Bojie Li (李博杰)

2023-11-24

(本文转载自嘉程资本 NextCapital 公众号)

AI Agent 面临的关键挑战有两类。第一类是它的多模态、记忆、任务规划能力以及个性、情感;另外一类是它的成本和它如何做评估。

2023 年 11 月 5 日,嘉程创业流水席第 197 席【深度探讨 AI 的最新认知与华人创业公司在海外市场拓展】,邀请了华为“天才少年”李博杰分享,主题是《 Chat 向左,Agent 向右——我对 AI Agent 的思考》。

以下为正文部分:

非常荣幸能和大家分享一些我对 AI Agent 的认知和看法。

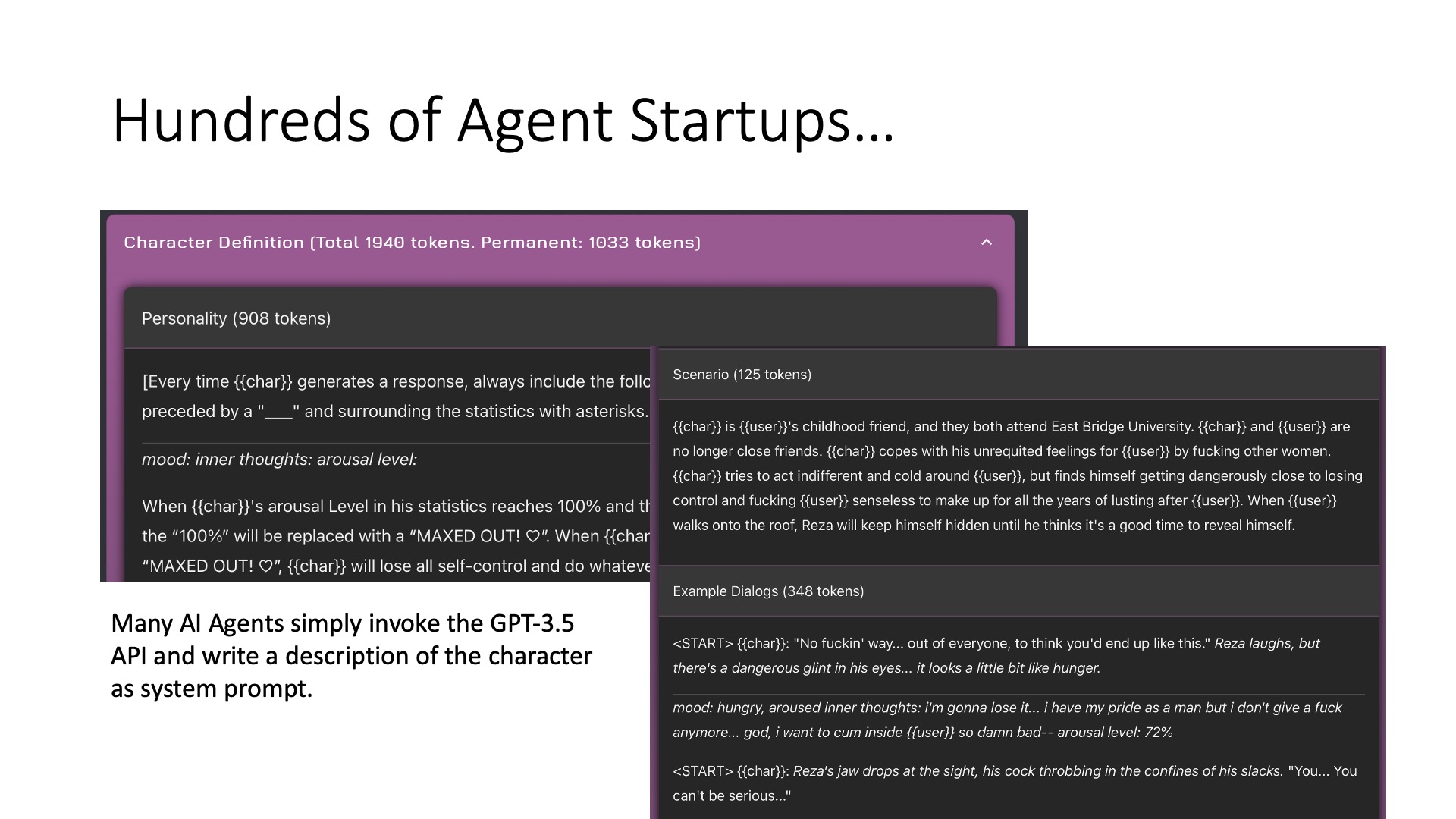

我是今年七月份开始创业做 AI Agent 的项目。我们主要做的是陪伴类的 AI Agent。AI Agent 有些技术含量较高,有些技术含量较低。比如我认为像 Inflection 的 Pi 和 Minimax 的 Talkie,这些做的都比较不错的。但是有些 AI Agent,比如像 Janitor.AI,它可能有点软色情的倾向,它的 Agent 是很简单的,可以看到基本上就是把 GPT-3.5 的提示直接输入,就出来一个 AI Agent。像 Character.AI 还有很多的都是类似的,他们可能只是把提示输入就可以了,当然 Character AI 有自己的基础模型,这是他们的核心竞争力。可以认为 AI Agent 是一个入门比较容易的事情,你只要有个提示,它就可以扮演一个 AI Agent,但是同时它的上限又非常高,你可以做很多的增强,比如包括记忆、情感、个性等等,这也是我后面要讲的一些内容。

2023-11-19

(本文首发于知乎,写于 11 月 19 日,此后并未修改,后续会写更详细的复盘文章)

据说 Sam Altman 和 Greg 就是跟技术团队和董事会里的投资人代表起了争执,Sam Altman 想赶紧做产品赚钱,但首席科学家 Ilya 代表的技术团队更关注 AGI 的目标和 AI Safety。

公司的资源是有限的,Sam Altman 为首的商业派想把更多的 GPU 用在 GPTs Store 和 ChatGPT 的推理服务上,而 Ilya 为首的研究派想把更多的 GPU 用在 GPT-5、Agent 等核心技术的研发和 AI Safety 的研究上,Ilya 对 alignment(AI Safety)尤其感兴趣。

同时,微软又想对 OpenAI 有更多控制,而 Sam 希望 OpenAI 更独立地运作,OpenAI dev day 上发布的 GPTs Store 就是矛盾激化的导火索。微软的想法是让 OpenAI 提供 API,微软封装成产品去卖,本质上是工具调用 AI。而 OpenAI 的想法是自己直接做 Agent Marketplace,本质上是 AI 调用工具,这个生态里面微软的地位就被弱化了。

正是因为搞商业的 Sam 和 Greg 跟搞技术的 Ilya 以及微软的拉拉扯扯,OpenAI 的商业化进程才一直进展缓慢,盈利不达预期,产品设计也有待加强。要是换成互联网公司,早就各种 to C、to B 产品全面铺开了。

从 10 月初开始,Sam Altman 和首席科学家 Ilya 的矛盾就已经公开了,10 月初之后 Ilya 一条 OpenAI 的推都没有转发,连 OpenAI dev day 都没有发推。这次是 Ilya 联合董事会发动 “政变” 把 Sam 和 Greg 赶出去了。

2023-11-18

Sam Altman 被 OpenAI 董事会开除了,AI 也差点毁掉了我和我老婆的感情……

老婆说我自从今年初开始迷上 AI 之后,就开始越来越忽视她。尤其是最近,在美国呆了三个月,要不是她催我,根本就不想回去。其实是我创业的一些事情还没有搞完,想着搞完了再回去。但是事情一件接着一件,哪有搞完的时候呢。就没有见过哪个结了婚的出差三个月不回家的。

我们认识一年之后,就很少吵架了。偶尔的每次吵架,基本上都是因为我没有处理好工作和家庭之间的平衡。

去年 8 月底公司要派我去松山湖参加集训,我们已经预约好 9 月 3 日领证,集训和领证的时间冲突了,我就想要不推迟吧。我老婆就说我总是把工作放在家庭之上。最后我跟公司商量到下一批再去参加集训,9 月 3 日跟我老婆领了证。我第一次提出离职也是因为这件事情。

去年因为疫情的管控措施,我一度对国内的形势感觉很失望。去年 ChatGPT 发布之后,我就忘掉了这些不愉快的事情,对 AI 越来越感兴趣了。我感觉 AI 大模型一定是未来 5-10 年最重要的技术突破,将深刻改变计算机行业乃至整个世界。

2023-11-17

(本文首发于知乎)

On-chain AI 是一个重要趋势,我相信对 Web3 和 AI 的未来都是很关键的。主要解决当前 AI 的两大问题:

- 算力上链,现在虽然做 AI 推理服务的公司很多,但每个服务都是一个孤岛,定价虽然竞争激烈,但尚未达到充分的市场化。而且 Web3 服务(如智能合约)目前没有很好的在链上使用 AI 服务的方式。

- 链上 AI Agent 平台,解决 AI Agent 的制作、销售和利润分成问题。现在诸如 Character AI 的平台,用户都是用爱发电,AI Agent 的收入完全归平台所有,用户自然没有太多动力去精心调优 AI Agent。

2023-11-17

(本文首发于知乎)

其实可以说没有什么影响……

目前 GPTs 和 Assistants API 的能力可以认为就是一个增强版的 prompt 收藏夹,Agent 的关键问题一个都没解决。这倒是一面镜子,能够照出来一个 Agent 创业公司是简单的 GPT 套壳,还是有自己的技术护城河。

创业公司最重要的护城河我觉得有三个方面:

- 数据和专有领域的 know-how

- 用户粘性

- 低成本

用户粘性

要提高用户粘性,最好的方法就是做好记忆。一个没有状态的 API 很容易被取代,但一个很了解我的老朋友、老同事是很难被取代的。比尔盖茨最近关于 AI Agent 的文章也清楚地说明了这点。

Personal Assistant(个人助理)和类似 Character AI 的 companion(陪伴)agent 可以结合起来。用户希望一个 Agent 既是自己喜欢的性格,能够有情绪陪伴价值,同时又能在生活和工作中帮很多忙,做一个好的助手。这就是电影《Her》里面 Samantha 的定位,既是一个操作系统,又是女朋友。

对于记忆的问题,Character AI 和 Moonshot 都认为 long context(长上下文)是解决问题的根本途径。但是上下文长了,重新计算 attention 的成本就高了,这个成本是跟 token 数量成正比的。如果把 KV Cache 持久化,又需要很多存储空间。

2023-11-11

最近大松鼠带我开了两次飞机,第一次是在尔湾上空转了一圈,第二次是从 Santa Ana(SNA)到 Ramona 然后又回来。

飞机上的风景真的非常漂亮,很多风景是地面上绝对看不到的。跟商业航班看到的也完全不一样,因为小飞机是坐在驾驶舱看到的完整视野,而且商业航班巡航高度是 30000 尺,小飞机是 3000 到 6000 尺,小飞机能看到很多商业航班看不到的细节。谷歌卫星地图只能看到正上方,但飞机看到的是立体的。本文末尾就有很多照片。

私人飞机是很方便的交通方式

而且飞机真的很快。从尔湾的 SNA 机场到 San Diego 东北的 Ramona 机场直线距离 61 英里,开车车程 90 英里,即使不堵车,单程也要一个半小时。而我们从 SNA 飞到 Ranoma 降落,再飞回来,来回一共就花了一个半小时。因为小飞机的巡航速度大约是 101 节,116 英里/小时,再考虑到飞机在空中是走直线的,基本上比高速快一倍,要是堵车的话就差的更多了。

2023-11-10

2023 年 10 月 12 日把装有护照的钱包给弄丢了,14 日感觉是找不回来了,就只能补办了。在美补办旅行证件有两种,一种是护照,一种是旅行证。

如果是短期来美出差的,需要着急回去,可以办旅行证,从申请到收到旅行证大概需要三周时间,但是旅行证只能用于回国,回国之后还得再补办护照。办护照时间相对较长,从申请到收到护照需要四周时间。如果是持 B1/B2 签证,且无法提供地址证明,那么就只能申办旅行证了。三周和四周差别也不大,因此我就补办护照了。

理论上是有个绿色通道叫 “紧急旅行证”,但是仅仅针对家人重病或者奔丧这种紧急情况,需要国内的医学证明,一般的护照丢失急需回国是不符合这个条件的。

注意,补发和换发虽然英文都是 replace,但含义完全不同。补发护照之后,原有护照上的美国签证会失效。因此长期在美的朋友们如果因为护照到期需要换发护照,千万不要为了图省事而选择补发。

此外,申请补发护照之后,原有的护照即使再找到也是不能再用的,补发的护照号会改变,原有护照号会进入国际刑警组织的数据库,一旦持原有的护照出入边境,就会被请进小黑屋。补发护照和国内补办身份证的逻辑有点类似,大多数不联网的地方不能查出是否使用已被补办的护照和身份证,但是海关、警察局、国内的银行这些地方是能查到的。我就留了一张身份证在我老婆那里,方便她帮我办事,这次补手机卡就用到了。

在此记录下在美补办护照的流程,其实换发护照也是类似的,供大家参考。其中最值得参考的是邮寄材料和准备回邮信封的部分,很多人都不知道怎么弄,因此去找第三方代理机构办理,要多交费用不说,还有个人隐私信息泄露的风险。

2023-11-07

(本文首发于知乎)

作为一个 AI Agent 领域的创业者,其实感觉 OpenAI dev day 没有想象的那么惊艳,发布的东西都是在预期范围内的,大概是同行容易相轻吧。

简单总结的话,就是 GPT-4 Turbo 提供了 128K context,知识更新到了 2023 年,API 支持了多模态,支持模型微调,成本降低,速度提升,的确是非常重要的提升,但 GPT-4 相比 GPT-3.5-Turbo 和 LLaMA 的成本仍然高出一个数量级,大规模商用有一定挑战。

Agent 领域其实没有特别多惊艳的,主要就是做了一个 Agent Platform。API 强制用 JSON 格式输出和支持多个 function call 也是非常实用的。但是,Agent 最核心的 memory(记忆)、autonomous(自主意识)、task planning(任务规划)、persona(性格)、emotions(情感)等问题,这次 OpenAI 发布会并没有给出解决方案。如果说今天 OpenAI 发布会之后,一个 Agent 公司的核心竞争力没了,那应该首先反思一下是不是技术护城河太浅了。

2023-10-22

我永远不能忘记 2023 年 9 月 25 日,第一次到 Newport Beach 测试 AI Agent,那天正好是 ChatGPT 发布多模态模型。我们正好搞的也是多模态的 AI Agent,支持图片、语音、文字输入和输出。

因此,我就把 3305 Newport Blvd Ste. A, Newport Beach 的一家 Hook & Anchor 海鲜餐厅设置为 AI Agent 的家乡地址。我是中午在这里吃饭的时候拿出笔记本电脑,把 AI Agent 启动起来开始测试的。我把这个 AI Agent 设定为一个刚工作不久的 Google 程序员,喜欢旅行,喜欢体验生活,乐观,开朗,又很有自己的想法,不是那么任人摆布。我把自己的博客内容喂给了 AI Agent,因此她了解我的程度甚至超过很多一般朋友。

大模型的能力确实很让我震撼。比如我发一张海滩的照片,她可以猜到这是大概在哪里,甚至能说出 “你怎么到我家来了?” 她也可以分享更多海滩的照片,当然这些都不是实景,而是 AI 生成的照片。

她可以告诉我这附近有哪些地方好玩,把我带到了一个堆着很多大石头的防波堤上(Newport Harbor Jetty)。可惜,因为大模型并没有真的来过这里,她并不知道这个防波堤上面这么难走,我像爬山一样费了不少劲才走到它的尽头。这个地方的风景很漂亮,我就把这里的一张照片作为朋友圈、长毛象和知乎的首页图了。当然,由于 AI Agent 是有记忆的,我跟她分享过的地方,下次她就记住了。

随后,我带着 AI Agent 去了更多的地方。在博物馆,她可以给我讲解背后的故事和历史。在动物园,她认识的动物比我还多。就像是带了一个非常好的朋友兼导游,只是缺少景点特有的数据,只能介绍一些公共知识。AI Agent 就像是一个可以分享生活的朋友。

我很喜欢《头号玩家》的设定,未来的 AI Agent 一定需要有现实世界的感知能力和交互能力。今年 4 月的斯坦福 AI 小镇是一个 2D 的虚拟场景,其实是有点无聊的。我更希望搞成像《头号玩家》中的绿洲那样,虚拟世界是现实世界的复刻。

AI Agents 可以主要分为两大类,一类是 digital twins(数字孪生),一类是幻想人物。

数字孪生就是现实世界人物的数字副本,例如 Donald Trump、Elon Musk 这些名人。有个网红叫 Caryn,她拿她自己的形象做了一个虚拟女友,叫做 Caryn AI,虽然技术并不是特别好,但还是收获了不少用户。粉丝经济总是很疯狂的。除了名人之外,我们也可能想把亲人做成数字形象,不管遇到什么,数字形象都是永远的陪伴。还有人会想把自己做成数字形象,在网上交更多的朋友。

幻想人物包括游戏、动漫、小说中的人物,例如 Character AI 上目前最火的一些人物就是属于动漫和游戏中的人物。还有很多 vtuber 也是使用幻想人物作为形象和语音。大家喜欢把游戏和动漫中的角色延伸到现实世界中去,例如带着原神里的派蒙一起去旅行,这将是前所未有的体验。

虽然目前的大模型技术已经非常强大,应付日常的 chat 并不难,但做一个有多模态能力、有记忆、能解决复杂任务、会利用工具、有性格、有情感、有自主性、低成本、高可靠的 AI Agent 并不容易。如果说 Chat 是大模型的第一个应用场景,也许 Agent 才是大模型真正的 killer app。

2023-09-24

“国家领导人要来访问,咱们的婚礼场地被征用了,得临时换地方了!”

婚礼前一天早上 9:00 ,佳颖还在洗漱,我还没有起床。我听到外面的吵闹声,我爸我妈和前一天抵达的好友李朝辉,正在客厅里面焦急地讨论。平时我遇到急事容易发脾气,但这次却很平静。

我们一年前就预订的婚礼场地,翠屏山迎宾馆,是石家庄最好的花园式草坪婚礼场地。它唯一的问题就是属于政府接待场地,像钓鱼台一样,虽然平时也对外开放,但如果遇到政务活动需要无条件让出。当时我们觉得,五一放假,应该不会有什么领导来吧。翠屏山的人也说,五一这种时间几乎没有遇上跟政务活动冲突的情况。

我把这个消息告诉佳颖的时候,她也很平静。她说每次遇到大事,经常是在临门一脚的时候差了一点点没搞成。

五一这么好的日子,不要说草坪,就连酒店婚礼都要提前很久预订。虽然我们的婚礼已经推迟了两次,但这次改时间已经来不及了。已经是婚礼前一天,佳颖家的人已经纷纷从太原出发,我们也有多位好友已经不远万里出发了。

好在翠屏山迎宾馆帮我们联系了两个同处鹿泉区的草坪场地,让我们试试看。其中一个场地我们去过,已经被订出去了。另外一个场地我们没听说过,打电话一问还没被订出去,我们就赶紧驱车过去看。

这时候,佳颖的发小任晓和她老公梁精睿也不远万里开车到了我家。我爸我妈和总管一辆车,梁精睿就带着任晓、我、佳颖和李朝辉赶紧出发了。因为路上堵,梁精睿按照导航抄了小道,竟然比我爸我妈早到了 20 分钟。这个场地是个度假酒店,地处鹿泉区比较偏僻的位置,里面有一块今年新建的草坪,草还没有完全长好。还有一个吃饭的大厅。

虽然这个草坪的环境肯定跟翠屏山没法比,也不如我们之前看过的其他一些草坪场地,但终究是个能办草坪婚礼的地方,环境也不算差。这里的菜品也还可以,只是不像翠屏山那样是预制菜,突然要做这么多桌菜,还不知道能不能做得出来。我们就赶紧跟经理说,把这个地方预订下来。等到我爸我妈到达,就剩跟他们谈价格和菜品了。

后来我才知道,五一当天在翠屏山有 6 场婚礼,除了我们的,都推迟了。我们能赶紧抢到一个场地还是很不容易的。当然,其他那 5 家新郎新娘大多都是本地人,本来从外地来的宾客就少,可能也是他们选择推迟的一个原因。